You Have an Audit Trail. It Is Not Enough.

Your engineering team has done the responsible thing. They have added logging to the AI pipeline. Every inference call is recorded. Every model prediction is written to a database table. Every input and output is captured in structured logs.

When someone asks "Do we have an AI audit trail?" the answer is yes. When the compliance team asks "Can we trace AI decisions?" the answer is yes. When a customer asks "Do you log AI activity?" the answer is yes.

But when an EU AI Act regulator asks "Can you prove the integrity of your AI decision records?" the answer, for the vast majority of organizations, is no.

This is the gap that most organizations do not recognize until it is too late. There is a fundamental difference between logging AI decisions and proving AI decisions. Standard audit trails do the first. The EU AI Act requires the second.

What Article 12 Actually Says

Article 12 of the EU AI Act establishes the record-keeping requirements for high-risk AI systems. The specific language is important:

High-risk AI systems shall technically allow for the automatic recording of events ('logs') over the duration of the lifetime of the system.

For the remote biometric identification systems referred to in Annex III, point 1(a), logging capabilities shall provide, at a minimum, the following:

- Recording of the period of each use of the system

- The reference database against which input data has been checked

- The input data for which the search has led to a match

- The identification of the natural persons involved in the verification of the results

But Article 12 does not exist in isolation. It connects to Article 17 (quality management system), Article 19 (automatically generated logs), and Article 72 (post-market monitoring). Read together, these articles create requirements that go well beyond what standard logging provides.

The European Commission's guidance on implementing these articles emphasizes three properties that audit records must possess:

- Traceability: The ability to reconstruct any AI decision from input to output, including the specific model version, data references, and processing steps used

- Integrity: Assurance that records have not been modified, deleted, or inserted after the fact

- Completeness: Coverage of the full decision lifecycle, including governance checks, human oversight actions, and post-decision monitoring events

Standard database logs, application logs, and CSV exports fail on at least one — and usually all three — of these properties.

The Five Failures of Standard AI Audit Trails

Failure 1: Mutable Records

The most fundamental failure is mutability. Standard audit trails are stored in databases, log files, or cloud storage systems where records can be modified.

Consider a typical implementation: AI inference results are written to a PostgreSQL table. Each row contains a timestamp, input data, model version, and output. This seems reasonable until you consider that:

- Any database administrator can execute an `UPDATE` or `DELETE` statement that modifies or removes records

- Application-level code can overwrite records through normal CRUD operations

- Database backups can be restored selectively, replacing current records with historical versions

- There is no mechanism to detect whether a record has been modified since it was created

A regulator examining a mutable audit trail has no way to distinguish between records that were created contemporaneously with the AI decisions they describe and records that were fabricated or modified after the fact.
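To make the point concrete, here is a minimal sketch using Python's built-in `sqlite3` module as a stand-in for the PostgreSQL table described above. The table name `inference_log` and its columns are hypothetical, chosen only for illustration. A single statement rewrites the recorded output, and nothing in the table reveals that it happened.

```python
import sqlite3

# Hypothetical table and column names, for illustration only.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE inference_log ("
    "id INTEGER PRIMARY KEY, ts TEXT, model_version TEXT, input TEXT, output TEXT)"
)
conn.execute(
    "INSERT INTO inference_log (ts, model_version, input, output) VALUES (?, ?, ?, ?)",
    ("2025-01-15T10:00:00Z", "v3.2", "record-4521", "class A"),
)

before = conn.execute("SELECT output FROM inference_log WHERE id = 1").fetchone()[0]

# Anyone with write access can rewrite history with one statement.
conn.execute("UPDATE inference_log SET output = 'class B' WHERE id = 1")

after = conn.execute("SELECT output FROM inference_log WHERE id = 1").fetchone()[0]
row_count = conn.execute("SELECT COUNT(*) FROM inference_log").fetchone()[0]

# The row count, id, and timestamp are unchanged; only the output differs.
print(f"before={before!r} after={after!r} rows={row_count}")
```

The table looks exactly as it would if "class B" had been the original prediction. That is the problem a regulator faces.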

This is not a theoretical concern. Regulatory enforcement in financial services and pharmaceutical manufacturing has repeatedly uncovered organizations that retroactively modified audit records. Regulators are trained to look for this.

Failure 2: No Integrity Verification

Standard audit trails provide no mechanism to confirm that a specific record existed at a specific point in time, that its content has not changed since creation, or that no records have been deleted or inserted out of sequence.

Without integrity verification, an audit trail is a claim, not evidence. "We logged everything" is a statement of intent. Regulators need proof. Financial services regulations (MiFID II, SOX) addressed this years ago by requiring tamper-evident systems. The EU AI Act applies the same principle to AI.

Failure 3: Missing Decision Context

Standard logging captures the mechanics of an AI decision: what data went in, what prediction came out. It does not capture the context of the decision: why the decision was made, what governance checks were applied, what alternative outcomes were considered, and how the decision fits into the broader operational workflow.

Article 12 requires logs that support "traceability of the functioning of the AI system throughout its lifecycle." Traceability requires context. A log entry that says "Model XGBoost-v3.2 predicted class A with confidence 0.87 for input record #4521" is a mechanical record. It does not answer:

- What business decision did this prediction inform?

- What governance policies were evaluated before this prediction was served to a user?

- Was a human reviewer involved? If so, did they accept or override the prediction?

- What risk thresholds were applied? Did this prediction exceed any thresholds?

- What was the evidence level of this decision record?

Without this context, a regulator cannot determine whether the AI system operated within its intended purpose and design constraints. The log proves the system ran. It does not prove the system operated correctly within a governance framework.
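One way to picture the difference is a record type that carries an answer to each of the questions above alongside the mechanical fields. The sketch below is an assumption about what such a record could look like, not a prescribed schema: every field name and every context value is hypothetical. Only the mechanical values (model version, record number, class, confidence) come from the log entry quoted earlier.

```python
from dataclasses import FrozenInstanceError, asdict, dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class DecisionRecord:
    # Mechanical fields: what a standard log already captures.
    model_version: str
    input_ref: str
    prediction: str
    confidence: float
    # Context fields (hypothetical names): what a standard log leaves out.
    business_decision: str
    policies_evaluated: Tuple[str, ...]
    human_reviewer: Optional[str]
    human_action: Optional[str]  # e.g. "accepted" or "overridden"
    risk_threshold: float
    threshold_exceeded: bool
    evidence_level: str


record = DecisionRecord(
    model_version="XGBoost-v3.2",
    input_ref="#4521",
    prediction="class A",
    confidence=0.87,
    business_decision="example: route application to fast-track queue",
    policies_evaluated=("example-policy-1", "example-policy-2"),
    human_reviewer="reviewer-17",
    human_action="accepted",
    risk_threshold=0.90,
    threshold_exceeded=False,
    evidence_level="documented",
)

# frozen=True makes accidental in-process mutation fail loudly.
try:
    record.prediction = "class B"  # type: ignore[misc]
    mutated = True
except FrozenInstanceError:
    mutated = False

as_dict = asdict(record)
print(sorted(as_dict))
```

A frozen dataclass only protects the object in memory. It says nothing about what happens once the record is stored, which is where the remaining failures come in.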

Failure 4: No Governance Proof

The EU AI Act requires that AI systems operate within a governance framework. Articles 9, 14, and 17 all require evidence that governance processes are functioning.

Standard audit trails record AI outputs but not governance activities. They cannot prove that a decision passed through required governance checkpoints, that human oversight was applied when required, that risk thresholds were evaluated, or that required approvals were obtained.

If your audit trail cannot prove that governance processes were applied to AI decisions, regulators will conclude they were not applied, regardless of what your policy documents say.
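A rough sketch of what recording governance activity could look like: each checkpoint is evaluated before the decision is served, and the result of every check is kept as data, pass or fail. The check names, the dictionary keys, and the rule that human review is required at or above the risk limit are all assumptions made for this example.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class CheckResult:
    name: str
    passed: bool
    detail: str


def evaluate_governance(decision: Dict) -> List[CheckResult]:
    """Evaluate hypothetical governance checkpoints and return every result."""
    risk = decision["risk_score"]
    limit = decision["risk_limit"]
    review_required = risk >= limit  # assumed rule, for illustration
    reviewer = decision.get("reviewer")
    approvals = decision.get("approvals", [])
    required = decision.get("required_approvals", 0)
    return [
        CheckResult("risk_threshold_evaluated", True, f"risk={risk} limit={limit}"),
        CheckResult(
            "human_oversight",
            (not review_required) or reviewer is not None,
            f"required={review_required} reviewer={reviewer}",
        ),
        CheckResult(
            "approvals_obtained",
            len(approvals) >= required,
            f"{len(approvals)} of {required}",
        ),
    ]


unreviewed = {"risk_score": 0.9, "risk_limit": 0.7, "reviewer": None,
              "approvals": ["approver-1"], "required_approvals": 1}
reviewed = dict(unreviewed, reviewer="reviewer-7")

unreviewed_results = evaluate_governance(unreviewed)
reviewed_results = evaluate_governance(reviewed)

for result in unreviewed_results:
    print(result.name, "PASS" if result.passed else "FAIL", result.detail)
```

The design choice that matters is that failed checks are recorded too. An audit trail that contains only successes cannot show that the checks were capable of failing.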

Failure 5: No Regulatory Export Format

When a regulator requests audit records, they need them in a format that supports systematic review. Standard audit trails typically export as:

- Raw database dumps (SQL)

- CSV files

- Application log files (JSON lines, syslog format)

- PDF reports generated from dashboards

None of these formats are designed for regulatory analysis. They require significant manual processing and lack the metadata necessary for automated regulatory assessment tools that supervisory authorities are increasingly deploying. Organizations that can only export raw logs will face higher scrutiny costs and longer assessment timelines.

The Difference Between Logging and Proving

The distinction between logging and proving is conceptual, not just technical. Here is how they compare across the dimensions that regulators evaluate:

| Dimension | Logging | Proving |
|---|---|---|
| Record integrity | Records can be modified without detection | Any modification is cryptographically detectable |
| Completeness verification | Cannot confirm no records were deleted | Gaps in the record chain are mathematically identifiable |
| Temporal proof | Timestamps can be changed after the fact | Timestamps are embedded in hash calculations |
| Decision context | Captures inputs and outputs only | Captures governance checks, human actions, and policy evaluations |
| Governance evidence | Not captured | Automatically recorded at each decision point |
| Regulatory export | Raw data requiring manual processing | Structured format designed for regulatory review |
| Provenance | Self-reported ("we logged this") | Cryptographically verifiable ("the hash chain proves this") |

Logging answers: "What did the AI system do?"

Proving answers: "What did the AI system do, under what governance framework, with what human oversight, and here is the cryptographic proof that this record has not been tampered with."

What a Compliant AI Audit Trail Requires

Based on the EU AI Act's requirements and emerging enforcement guidance, a compliant AI audit trail must possess these six properties:

Property 1: Immutability

Records, once written, cannot be modified or deleted. This is not just a policy ("we do not delete records"). It is a technical guarantee. The storage mechanism must prevent modification or, failing that, make any modification detectable.

Property 2: Tamper Evidence

If someone attempts to modify a record — whether through malice, error, or system failure — the audit trail must make the modification visible. Tamper evidence means that the audit trail itself reveals when it has been compromised.

Property 3: Sequential Integrity

Records must be demonstrably ordered. It must be provable that record N was created after record N-1 and before record N+1. This prevents retroactive insertion of fabricated records.

Property 4: Decision Context

Each record must include not just the AI system's input/output but the full decision context: governance checks applied, human review status, risk thresholds evaluated, evidence level, and any approvals obtained.

Property 5: Verifiability

Any party — internal auditors, external regulators, independent assessors — must be able to independently verify the integrity of the audit trail without relying on the organization's assertions.

Property 6: Structured Export

The audit trail must be exportable in a machine-readable, structured format that enables systematic regulatory review. The format should include sufficient metadata for automated analysis.
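As an illustration of what "sufficient metadata" might mean in practice, the sketch below wraps a batch of records in an envelope that states which system they came from, how many there are, and which sequence range they cover. The envelope fields and the `@context` URL are placeholders invented for this example. They are not a published schema.

```python
import json
from typing import Dict, List


def export_records(records: List[Dict], system_id: str) -> str:
    """Serialize records with envelope metadata a reviewer's tooling can check."""
    document = {
        "@context": "https://example.org/audit-export/v1",  # placeholder URL
        "system_id": system_id,
        "record_count": len(records),
        "first_sequence": records[0]["sequence"] if records else None,
        "last_sequence": records[-1]["sequence"] if records else None,
        "records": records,
    }
    # sort_keys gives a stable byte-for-byte output for identical input.
    return json.dumps(document, indent=2, sort_keys=True)


sample = [
    {"sequence": 1, "output": "class A", "hash": "placeholder-hash-1"},
    {"sequence": 2, "output": "class B", "hash": "placeholder-hash-2"},
]
exported = export_records(sample, system_id="example-system")
parsed = json.loads(exported)
print(exported)
```

With the count and sequence range stated up front, a reviewer can check that the export is complete before reading a single record.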

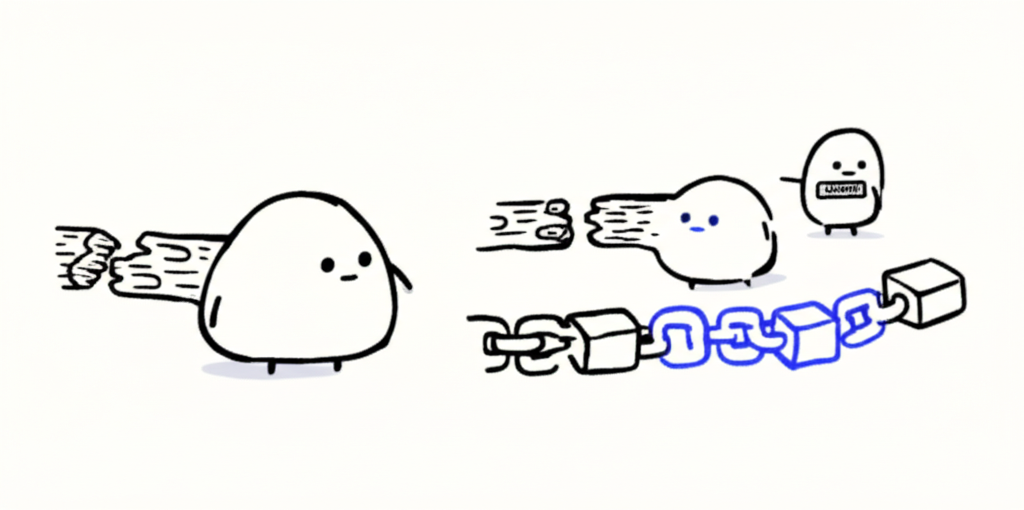

How Hash Chains Solve the Integrity Problem

Cryptographic hash chains are the established solution for creating immutable, tamper-evident records. The concept is straightforward:

- First record: Record R1 is created. Its content is hashed using SHA-256 (or similar), producing hash H1.

- Second record: Record R2 is created. Its hash H2 is computed from R2's content plus H1. This means H2 depends on both R2 and the entire history before it.

- Nth record: Record RN's hash HN is computed from RN's content plus HN-1. This creates a chain where every record is cryptographically linked to every record that came before it.
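The three steps above can be sketched in a few lines of Python with the standard `hashlib` module. This is a minimal illustration under stated assumptions, not a production design: the function names are hypothetical, records are serialized as canonical JSON, and a fixed placeholder (`GENESIS_HASH`) stands in for the missing predecessor of the first record so that every record is hashed the same way.

```python
import hashlib
import json
from typing import Dict, List

# Assumption: a fixed placeholder stands in for "no previous hash" so the
# first record is hashed the same way as every later one.
GENESIS_HASH = "0" * 64


def chain_hash(content: Dict, previous_hash: str) -> str:
    """SHA-256 over the previous hash plus a canonical JSON form of the content."""
    payload = json.dumps(content, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((previous_hash + payload).encode("utf-8")).hexdigest()


def append_record(chain: List[Dict], content: Dict) -> Dict:
    """Link a new record to the current end of the chain and return it."""
    previous_hash = chain[-1]["hash"] if chain else GENESIS_HASH
    entry = {
        "content": content,
        "previous_hash": previous_hash,
        "hash": chain_hash(content, previous_hash),
    }
    chain.append(entry)
    return entry


chain: List[Dict] = []
append_record(chain, {"model": "v3.2", "input": "record-1", "output": "class A"})
append_record(chain, {"model": "v3.2", "input": "record-2", "output": "class B"})
append_record(chain, {"model": "v3.2", "input": "record-3", "output": "class A"})

for position, entry in enumerate(chain, start=1):
    print(f"H{position} = {entry['hash'][:16]}...")
```

Canonical serialization (sorted keys, fixed separators) matters here: the same record must always produce the same bytes, or verification will fail for reasons that have nothing to do with tampering.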

This creates three properties automatically:

- Immutability: If any record is modified, its hash changes. Because subsequent hashes depend on predecessors, every hash after the modified record also changes. Tampering with a single record breaks the entire subsequent chain.

- Sequential integrity: Records are provably ordered because each hash incorporates its predecessor. You cannot insert a record between R5 and R6 without changing H6 and every hash that follows.

- Completeness: Deleting a record breaks the chain at the deletion point. The gap is mathematically detectable.
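Each of these three properties can be checked by walking the chain from the start and recomputing every hash. The sketch below builds a five-record chain, then modifies, deletes, and inserts a record in separate copies and reports where verification first fails. Function names and the `GENESIS_HASH` placeholder are assumptions made for this example.

```python
import copy
import hashlib
import json
from typing import Dict, List, Optional

GENESIS_HASH = "0" * 64  # assumed placeholder for "no previous hash"


def chain_hash(content: Dict, previous_hash: str) -> str:
    payload = json.dumps(content, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((previous_hash + payload).encode("utf-8")).hexdigest()


def build_chain(contents: List[Dict]) -> List[Dict]:
    chain: List[Dict] = []
    previous_hash = GENESIS_HASH
    for content in contents:
        entry_hash = chain_hash(content, previous_hash)
        chain.append({"content": content, "previous_hash": previous_hash, "hash": entry_hash})
        previous_hash = entry_hash
    return chain


def first_broken_index(chain: List[Dict]) -> Optional[int]:
    """Return the index of the first entry that fails verification, or None."""
    expected_previous = GENESIS_HASH
    for index, entry in enumerate(chain):
        link_ok = entry["previous_hash"] == expected_previous
        hash_ok = entry["hash"] == chain_hash(entry["content"], entry["previous_hash"])
        if not (link_ok and hash_ok):
            return index
        expected_previous = entry["hash"]
    return None


original = build_chain([{"id": n, "output": "class A"} for n in range(1, 6)])

modified = copy.deepcopy(original)
modified[2]["content"]["output"] = "class B"  # edit a record in place

deleted = copy.deepcopy(original)
del deleted[2]  # remove a record from the middle

inserted = copy.deepcopy(original)
forged = {"id": 99, "output": "class C"}
inserted.insert(2, {  # splice in a forgery that is itself well formed
    "content": forged,
    "previous_hash": inserted[1]["hash"],
    "hash": chain_hash(forged, inserted[1]["hash"]),
})

for label, candidate in [("original", original), ("modified", modified),
                         ("deleted", deleted), ("inserted", inserted)]:
    print(label, first_broken_index(candidate))
```

One caveat worth stating: a walk like this cannot detect records removed from the end of the chain, or a chain that was recomputed in full by someone with write access to all of it. Detecting those cases requires keeping the latest hash somewhere the record keeper cannot alter.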

Hash chains have been used in financial services for regulatory record-keeping for over a decade. They are the foundation of blockchain technology (without the distributed consensus overhead). And they are exactly what the EU AI Act's integrity requirements demand.

Beyond Hashing: What a Complete Solution Includes

Hash chains solve the integrity problem, but a compliant AI audit trail requires more. The complete solution adds:

- Governance layer: Before recording a decision, the system evaluates governance policy satisfaction — human review, risk thresholds, approval levels — and records the results inside the hash chain

- Evidence levels: Records track maturity (draft, documented, audit-ready) with enforcement rules about when records can be promoted or locked

- Append-only storage: SQL-level protections prevent UPDATE and DELETE operations, providing defense-in-depth alongside hash chain integrity

- Structured export: Semantic formats that map to EU AI Act requirements, enabling automated regulatory analysis rather than manual review of raw data dumps
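The append-only item in this list can be illustrated with database triggers. The sketch below uses SQLite through Python's built-in `sqlite3` module because it runs anywhere. The table and trigger names are hypothetical, and other databases offer their own mechanisms for the same effect.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE audit_log (
        seq     INTEGER PRIMARY KEY AUTOINCREMENT,
        content TEXT NOT NULL,
        hash    TEXT NOT NULL
    );

    -- Reject any attempt to change or remove an existing row.
    CREATE TRIGGER audit_log_no_update BEFORE UPDATE ON audit_log
    BEGIN
        SELECT RAISE(ABORT, 'audit_log is append-only');
    END;

    CREATE TRIGGER audit_log_no_delete BEFORE DELETE ON audit_log
    BEGIN
        SELECT RAISE(ABORT, 'audit_log is append-only');
    END;
    """
)
conn.execute(
    "INSERT INTO audit_log (content, hash) VALUES (?, ?)",
    ("decision-1", "placeholder-hash"),
)


def attempt(sql: str) -> str:
    """Run a statement and report whether the database allowed it."""
    try:
        conn.execute(sql)
        return "allowed"
    except sqlite3.DatabaseError as exc:
        return f"blocked: {exc}"


update_result = attempt("UPDATE audit_log SET content = 'edited' WHERE seq = 1")
delete_result = attempt("DELETE FROM audit_log WHERE seq = 1")
insert_result = attempt("INSERT INTO audit_log (content, hash) VALUES ('decision-2', 'h2')")
remaining = conn.execute("SELECT content FROM audit_log ORDER BY seq").fetchall()

print(update_result, delete_result, insert_result, remaining, sep="\n")
```

Triggers stop ordinary application code and casual edits, but a user with enough privileges can drop them. That is why the list above frames this as defense-in-depth alongside the hash chain rather than a replacement for it.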

How Cronozen's Audit Trail Meets Every Requirement

Cronozen's Decision Proof Unit (DPU) implements a SHA-256 hash chain audit trail that addresses all six compliance properties:

- Immutability: Every decision record is hashed using SHA-256, with each hash computed from the record content, the previous hash, and the timestamp. The function `computeChainHash(content, previousHash, timestamp)` creates an unbreakable cryptographic chain from the genesis record onward.

- Tamper evidence: Modifying any record in the chain causes all subsequent hashes to fail verification. The chain is self-auditing: integrity can be verified by any party at any time by recomputing hashes from the genesis record.

- Decision context: DPU records capture far more than inputs and outputs. Each record includes the five governance checks (policy existence, evidence level, human review, risk threshold, dual approval), the decision rationale, and the complete processing context.

- Evidence progression: Records move through three stages — DRAFT (initial capture), DOCUMENTED (structured and validated), and AUDIT_READY (reviewed and locked). Once a record reaches AUDIT_READY status, it cannot be modified. Any attempt to modify a locked record breaks the hash chain.

- Append-only SQL: The database layer enforces append-only semantics with SQL-level protections. Twelve distinct event types (creation, review, approval, escalation, and eight others) provide granular traceability across the decision lifecycle.

- JSON-LD v2 export: All audit records are exportable in a standardized format at schema.cronozen.com/decision-proof/v2. This structured schema maps directly to EU AI Act Article 12 requirements and is designed for machine-readable regulatory submission.
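For readers who want to picture the three-stage evidence progression described in this list, here is a generic sketch of a one-way state machine with a terminal locked stage. It is an illustration of the idea only, written for this article's explanation. It is not Cronozen's code, and the class and method names are hypothetical.

```python
from enum import Enum


class EvidenceLevel(Enum):
    DRAFT = "DRAFT"
    DOCUMENTED = "DOCUMENTED"
    AUDIT_READY = "AUDIT_READY"


# Each stage may only advance to the next one; AUDIT_READY is terminal.
NEXT_LEVEL = {
    EvidenceLevel.DRAFT: EvidenceLevel.DOCUMENTED,
    EvidenceLevel.DOCUMENTED: EvidenceLevel.AUDIT_READY,
}


class LockedRecordError(Exception):
    """Raised when code tries to change a record that is already locked."""


class DecisionRecord:
    def __init__(self, content: str) -> None:
        self.content = content
        self.level = EvidenceLevel.DRAFT

    def promote(self) -> EvidenceLevel:
        if self.level not in NEXT_LEVEL:
            raise LockedRecordError("AUDIT_READY records cannot be promoted further")
        self.level = NEXT_LEVEL[self.level]
        return self.level

    def revise(self, content: str) -> None:
        if self.level is EvidenceLevel.AUDIT_READY:
            raise LockedRecordError("AUDIT_READY records cannot be modified")
        self.content = content


record = DecisionRecord("initial capture")
record.revise("structured and validated")  # allowed while still a draft
record.promote()                           # DRAFT -> DOCUMENTED
record.promote()                           # DOCUMENTED -> AUDIT_READY

try:
    record.revise("late edit")
    late_edit = "allowed"
except LockedRecordError as exc:
    late_edit = f"blocked: {exc}"

print(record.level.value, late_edit)
```

An application-level lock like this is a convenience, not a proof. The proof comes from the hash chain, which exposes any change made by going around the application.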

The difference is simple: standard audit trails require regulators to trust your logs. Cronozen's DPU provides cryptographic proof that your logs are trustworthy.

Ready to upgrade from AI logging to AI proving? Book a Demo to see how Cronozen's hash chain audit trail provides the compliance evidence that regulators require.